Transfer learning is a machine learning technique in which a model trained on one task is adapted to a new, related task with comparatively little additional training. This article surveys transfer learning: its foundational principles, main methodologies, practical applications, and future directions.

Understanding Transfer Learning

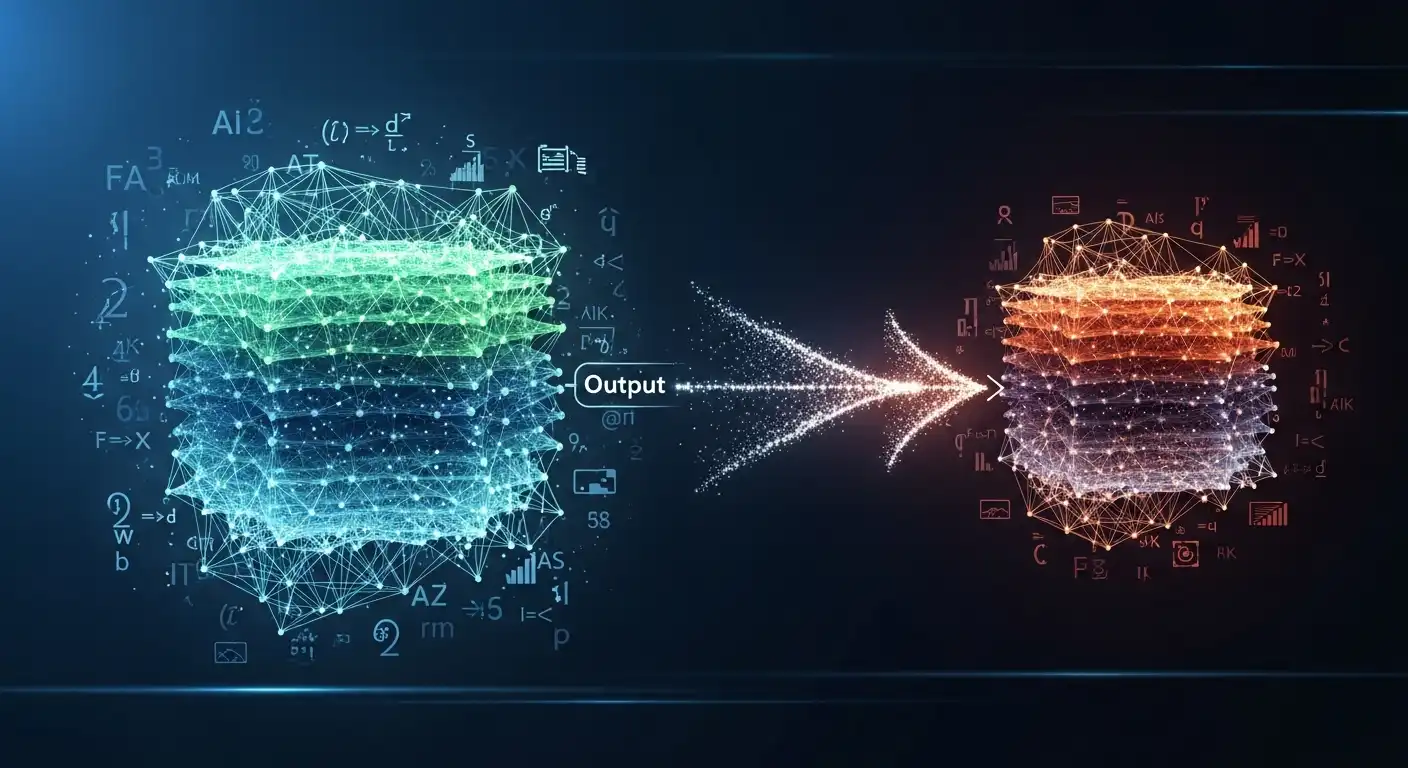

Transfer learning involves leveraging knowledge gained from solving one task to improve performance on a related task. Instead of training a model from scratch for each new task, transfer learning enables the reuse of pre-trained models or learned representations, thereby accelerating training and enhancing generalization.

Core Concepts of Transfer Learning

At its core, transfer learning moves knowledge from a source domain to a target domain. This typically involves extracting meaningful representations from the source data, adapting these representations to the target domain, and fine-tuning the model parameters to optimize performance on the target task.

Types of Transfer Learning

Transfer learning can be categorized into several types based on the relationship between the source and target tasks. These include domain adaptation, in which the source and target domains have different data distributions but share underlying features, and multitask learning, in which a single model is trained on multiple related tasks simultaneously so that each task benefits from shared knowledge.
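As a rough illustration of the multitask case, the sketch below fits two toy regression tasks with one shared slope and one bias per task. The task names, the data, and the `train_multitask` helper are all hypothetical and chosen only to keep the example small; real multitask models share whole layers rather than a single number.

```python
def train_multitask(tasks, lr=0.05, steps=2000):
    """Jointly fit y = w * x + b_task, with w shared and one bias per task."""
    w = 0.0
    biases = {name: 0.0 for name in tasks}
    for _ in range(steps):
        grad_w = 0.0
        grad_b = {name: 0.0 for name in tasks}
        for name, data in tasks.items():
            for x, y in data:
                err = w * x + biases[name] - y
                grad_w += 2 * err * x / len(data)    # every task contributes to w
                grad_b[name] += 2 * err / len(data)  # each bias sees only its own task
        w -= lr * grad_w
        for name in tasks:
            biases[name] -= lr * grad_b[name]
    return w, biases


# Toy data (assumed for illustration): both tasks share the slope 3.
tasks = {
    "task_a": [(0.0, 1.0), (1.0, 4.0), (2.0, 7.0)],  # y = 3x + 1
    "task_b": [(-1.0, -5.0), (1.0, 1.0)],            # y = 3x - 2
}

w, biases = train_multitask(tasks)
print(round(w, 3), {name: round(b, 3) for name, b in biases.items()})
```

The shared parameter receives gradient from both tasks, which is the mechanism by which one task's data can help another in this setup.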

Benefits of Transfer Learning

Transfer learning offers several benefits, including improved model performance, reduced training time and data requirements, and better generalization to new tasks and domains. By leveraging knowledge acquired from previous tasks, models can often reach higher accuracy and robustness than they would if trained from scratch on limited target data, provided the source and target tasks are sufficiently related.

Methodologies in Transfer Learning

Transfer learning encompasses various methodologies and techniques for transferring knowledge between tasks and domains, each tailored to address specific challenges and requirements.

Feature Extraction

Feature extraction uses a pre-trained model, or a subset of its layers, to compute features or representations of the input data. These features capture high-level patterns and structures in the data. Typically the pre-trained layers are kept frozen and only a small task-specific model is trained on top of them, which keeps training inexpensive and tends to work well when target data is limited.
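A minimal sketch of this pattern in plain Python follows. The fixed matrix `FROZEN_W` is a hypothetical stand-in for a real pre-trained network, and the toy target task (label 1 when the first input exceeds the second) is assumed purely for illustration. Only the small logistic-regression head is trained; the extractor's weights are never updated.

```python
import math

# Hypothetical stand-in for pre-trained weights; never updated below.
FROZEN_W = [[1.0, -1.0], [0.5, 0.5]]


def extract_features(x):
    """Frozen feature extractor: a fixed linear layer followed by tanh."""
    return [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in FROZEN_W]


def train_head(samples, labels, lr=0.5, epochs=200):
    """Train only a logistic-regression head on top of the frozen features."""
    feats = [extract_features(x) for x in samples]  # computed once, then reused
    w, b = [0.0] * len(feats[0]), 0.0
    for _ in range(epochs):
        for f, y in zip(feats, labels):
            logit = sum(wi * fi for wi, fi in zip(w, f)) + b
            p = 1.0 / (1.0 + math.exp(-logit))
            g = p - y  # gradient of the log loss with respect to the logit
            w = [wi - lr * g * fi for wi, fi in zip(w, f)]
            b -= lr * g
    return w, b


def predict(x, w, b):
    f = extract_features(x)
    return 1 if sum(wi * fi for wi, fi in zip(w, f)) + b > 0 else 0


# Toy target task (assumed): label is 1 when x[0] > x[1].
samples = [[2.0, 0.0], [1.0, -1.0], [0.0, 2.0], [-1.0, 1.0], [3.0, 1.0], [1.0, 3.0]]
labels = [1, 1, 0, 0, 1, 0]

w, b = train_head(samples, labels)
print([predict(x, w, b) for x in samples])
```

Because the features are computed once and cached, each training pass touches only the head's few parameters, which is where the efficiency gain comes from.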

Fine-Tuning

Fine-tuning entails adjusting the parameters of a pre-trained model for a new task or domain by updating its weights using task-specific data. This process allows the model to adapt its learned representations to the target task, improving performance while retaining knowledge from the source domain.
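The sketch below illustrates the idea on a deliberately tiny linear model. The source task (y = 2x), the target task (y = 2.5x + 0.5), the learning rates, and the step counts are all assumptions picked for the example. The pre-trained parameters serve as the starting point, and a few gradient steps on four target examples adapt them.

```python
def mse(params, data):
    w, b = params
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


def gradient_steps(params, data, lr, steps):
    """Run full-batch gradient descent on mean squared error."""
    w, b = params
    n = len(data)
    for _ in range(steps):
        gw = sum(2 * (w * x + b - y) * x for x, y in data) / n
        gb = sum(2 * (w * x + b - y) for x, y in data) / n
        w, b = w - lr * gw, b - lr * gb
    return w, b


# "Pre-training": plenty of source data for y = 2x, trained to convergence.
source = [(x / 10, 2 * x / 10) for x in range(-20, 21)]
pretrained = gradient_steps((0.0, 0.0), source, lr=0.1, steps=500)

# Target task: y = 2.5x + 0.5, with only four labeled examples.
target = [(-1.5, -3.25), (-0.5, -0.75), (0.5, 1.75), (1.5, 4.25)]

# Same small training budget, different starting points.
fine_tuned = gradient_steps(pretrained, target, lr=0.05, steps=10)
from_scratch = gradient_steps((0.0, 0.0), target, lr=0.05, steps=10)

print("pre-trained, no adaptation:", mse(pretrained, target))
print("fine-tuned:                ", mse(fine_tuned, target))
print("from scratch:              ", mse(from_scratch, target))
```

In this toy setting the fine-tuned model ends with a lower target error than the model trained from scratch under the same budget, because it starts closer to the target solution. That advantage depends on the source and target tasks being related.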

Domain Adaptation

Domain adaptation focuses on adapting models trained on a source domain so that they perform well on a target domain with different distributional characteristics. Techniques such as adversarial training (for example, domain-adversarial neural networks, or DANNs) and domain-specific normalization layers help align feature distributions across domains, facilitating knowledge transfer and adaptation.
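The following sketch shows the simplest form of the normalization idea: each domain's feature is standardized with that domain's own statistics before a classifier trained on the source is applied. This is a much simpler stand-in than a DANN, and the data, the shift between domains, and the threshold "classifier" are all assumed for illustration. Target labels are used only to measure accuracy, not to adapt.

```python
import statistics


def standardize(values):
    """Normalize one domain's feature with that domain's own mean and std."""
    mean = statistics.mean(values)
    std = statistics.pstdev(values)
    return [(v - mean) / std for v in values]


def fit_threshold(features, labels):
    """Tiny 'classifier': the midpoint between the two class means."""
    pos = [f for f, y in zip(features, labels) if y == 1]
    neg = [f for f, y in zip(features, labels) if y == 0]
    return (statistics.mean(pos) + statistics.mean(neg)) / 2


def accuracy(features, labels, threshold):
    preds = [1 if f > threshold else 0 for f in features]
    return sum(p == y for p, y in zip(preds, labels)) / len(labels)


source_x = [1.0, 2.0, 3.0, 7.0, 8.0, 9.0]
source_y = [0, 0, 0, 1, 1, 1]
# Target domain (assumed): same structure, but shifted and rescaled (x -> 10x + 100).
target_x = [110.0, 120.0, 130.0, 170.0, 180.0, 190.0]
target_y = [0, 0, 0, 1, 1, 1]

# Without alignment, the source threshold is meaningless on target data.
raw_threshold = fit_threshold(source_x, source_y)
acc_raw = accuracy(target_x, target_y, raw_threshold)

# With per-domain normalization, the same kind of classifier transfers.
norm_threshold = fit_threshold(standardize(source_x), source_y)
acc_aligned = accuracy(standardize(target_x), target_y, norm_threshold)

print(acc_raw, acc_aligned)
```

Per-domain standardization only corrects shifts in mean and scale, and it implicitly assumes the class balance is similar in both domains. More complex shifts are where learned alignment methods come in.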

Applications of Transfer Learning

Transfer learning finds diverse applications across various domains and industries, enabling more efficient and effective solutions to complex problems.

Natural Language Processing

In natural language processing (NLP), pre-trained language models (e.g., BERT, GPT) have substantially improved results on tasks including sentiment analysis, text classification, and machine translation. By fine-tuning pre-trained models on domain-specific data, NLP practitioners can often achieve strong performance with relatively little annotation effort.

Computer Vision

Transfer learning has been instrumental in advancing computer vision tasks such as image classification, object detection, and semantic segmentation. Pre-trained convolutional neural networks (CNNs) such as VGG, ResNet, and MobileNet serve as feature extractors, enabling transfer learning for downstream tasks with limited labeled data.

Healthcare and Biomedical Imaging

In healthcare and biomedical imaging, transfer learning facilitates the development of diagnostic models for tasks such as medical image analysis, disease detection, and prognosis prediction. By leveraging models pre-trained on large-scale image datasets, researchers can transfer knowledge to medical imaging tasks with limited annotated data, with the aim of improving diagnostic accuracy and patient outcomes.

Future Directions of Transfer Learning

As transfer learning continues to evolve, future research should address emerging challenges and expand the scope of applications across domains.

Lifelong Learning and Continual Adaptation

Lifelong learning and continual adaptation represent key research directions in transfer learning. They focus on developing models that continually learn and adapt to new tasks and environments. By integrating mechanisms for knowledge retention, transfer, and adaptation, lifelong learning systems can evolve and improve performance with experience.
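One simple retention mechanism is rehearsal: keeping some examples from earlier tasks and mixing them into later training. The sketch below is an assumption-laden toy, not a method taken from this article. It uses a three-parameter linear model, two made-up tasks that are both consistent with y = 2*x0 - x1 + 1, and a memory that stores all of the first task's data, and compares naive sequential training against training with replay.

```python
def predict(params, x):
    w0, w1, b = params
    return w0 * x[0] + w1 * x[1] + b


def mse(params, data):
    return sum((predict(params, x) - y) ** 2 for x, y in data) / len(data)


def train(params, data, lr=0.05, steps=2000):
    """Full-batch gradient descent on mean squared error."""
    w0, w1, b = params
    n = len(data)
    for _ in range(steps):
        g0 = g1 = gb = 0.0
        for x, y in data:
            err = predict((w0, w1, b), x) - y
            g0 += 2 * err * x[0] / n
            g1 += 2 * err * x[1] / n
            gb += 2 * err / n
        w0, w1, b = w0 - lr * g0, w1 - lr * g1, b - lr * gb
    return (w0, w1, b)


# Each task covers only part of the input space (toy data, assumed).
task_a = [((-1.0, 0.0), -1.0), ((0.0, 0.0), 1.0), ((1.0, 0.0), 3.0)]
task_b = [((1.0, 1.0), 2.0), ((2.0, 2.0), 3.0)]

after_a = train((0.0, 0.0, 0.0), task_a)

# Naive sequential training: task B overwrites part of what task A taught.
naive = train(after_a, task_b)

# Rehearsal: replay stored task A examples alongside task B.
replay = train(after_a, task_b + task_a)

print("task A error, naive: ", mse(naive, task_a))
print("task A error, replay:", mse(replay, task_a))
```

In this toy case the naive model fits the second task but its error on the first task rises, while the replayed model fits both. Practical systems usually store only a small subset of past data or use regularization-based alternatives.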

Meta-Learning and Few-Shot Learning

Meta-learning techniques enable models to learn how to learn, allowing them to adapt quickly to new tasks from only a few training examples. Gradient-based meta-learning approaches, such as model-agnostic meta-learning (MAML), are widely used for few-shot learning: they train a model so that a small number of examples from a new task is enough to adapt it, enhancing its ability to transfer knowledge to novel tasks.
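To make the MAML idea concrete, here is a minimal sketch for a one-parameter model y = theta * x, where the second-order term can be written out by hand. The task family (lines through the origin with different slopes), the support and query points, and the learning rates are all assumptions for the example. The inner loop takes one gradient step on a task's support set, and the outer loop updates the initialization so that the loss after that step is small on the query set.

```python
def loss(theta, data):
    return sum((theta * x - y) ** 2 for x, y in data) / len(data)


def grad(theta, data):
    return sum(2 * (theta * x - y) * x for x, y in data) / len(data)


def hessian(data):
    """Second derivative of the loss; constant for this quadratic model."""
    return sum(2 * x * x for x, _ in data) / len(data)


def adapt(theta, support, inner_lr):
    """Inner loop: one gradient step on a task's support set."""
    return theta - inner_lr * grad(theta, support)


def maml_train(tasks, inner_lr=0.1, outer_lr=0.1, steps=500):
    theta = 0.0
    for _ in range(steps):
        meta_grad = 0.0
        for support, query in tasks:
            adapted = adapt(theta, support, inner_lr)
            # Chain rule through the inner step: d(adapted) / d(theta).
            d_adapted = 1.0 - inner_lr * hessian(support)
            meta_grad += grad(adapted, query) * d_adapted
        theta -= outer_lr * meta_grad / len(tasks)
    return theta


def make_task(slope):
    """Hypothetical task: fit y = slope * x from two support points."""
    support = [(x, slope * x) for x in (1.0, 2.0)]
    query = [(x, slope * x) for x in (0.5, 1.5)]
    return support, query


tasks = [make_task(s) for s in (1.0, 2.0, 3.0)]
theta = maml_train(tasks)

# Adapting to an unseen task takes a single step from the learned initialization.
new_support, new_query = make_task(2.5)
adapted = adapt(theta, new_support, inner_lr=0.1)
print(theta, loss(theta, new_query), loss(adapted, new_query))
```

For neural networks the same chain-rule term requires differentiating through the inner update, which is usually left to an automatic differentiation library or dropped in first-order approximations.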

Cross-Modal Transfer Learning

Cross-modal transfer learning explores knowledge transfer between different modalities, such as text, images, and audio. By leveraging representations shared across modalities, models can learn from diverse data sources, enabling more robust and versatile AI systems for multimodal applications.

Conclusion

Transfer learning is a versatile technique in machine learning that enables the efficient reuse of knowledge across tasks and domains. By leveraging pre-trained models and learned representations, it accelerates training, improves performance, and enhances generalization in AI systems. As research advances in areas such as continual learning, meta-learning, and cross-modal transfer, transfer learning is likely to extend to new capabilities and applications across diverse domains.