At the IEEE International Electron Devices Meeting (IEDM 2023), researchers from Tohoku University and the University of California presented a proof of concept for an energy-efficient computer aimed at current AI applications. The approach exploits the stochastic behavior of nanoscale spintronic devices. This makes them well suited to probabilistic computations, such as inference and sampling, that are common in machine learning (ML) and artificial intelligence (AI).

As Moore’s Law slows, demand for domain-specific hardware is growing. Probabilistic computing builds on naturally stochastic components called probabilistic bits (p-bits). It has emerged as a promising way to tackle complex ML and AI tasks efficiently. Quantum computers are suited to inherently quantum problems. In the same way, room-temperature probabilistic computers are suited to intrinsically probabilistic algorithms, which underpin many AI applications.
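In the probabilistic-computing literature, a p-bit is commonly modeled as a binary unit whose output m fluctuates randomly between +1 and -1. An input I biases it according to m = sgn(tanh(I) - r), where r is drawn uniformly from [-1, 1]. The minimal Python sketch below assumes that model and substitutes a pseudorandom number generator for the physical thermal noise of an sMTJ. The function names are hypothetical and are not taken from the IEDM paper.

```python
import math
import random


def p_bit(input_current: float, rng: random.Random) -> int:
    """Emulated p-bit (assumed model): returns +1 or -1.

    Because r is uniform on [-1, 1], P(+1) = (1 + tanh(I)) / 2.
    """
    r = rng.uniform(-1.0, 1.0)  # stands in for the device's thermal noise
    return 1 if math.tanh(input_current) > r else -1


def estimate_p_plus(input_current: float, n_samples: int = 100_000, seed: int = 0) -> float:
    """Estimate P(output = +1) by sampling the emulated p-bit many times."""
    rng = random.Random(seed)
    hits = sum(1 for _ in range(n_samples) if p_bit(input_current, rng) == 1)
    return hits / n_samples


if __name__ == "__main__":
    for current in (-2.0, 0.0, 0.5, 2.0):
        empirical = estimate_p_plus(current)
        expected = (1 + math.tanh(current)) / 2
        print(f"I={current:+.1f}  empirical P(+1)={empirical:.3f}  expected={expected:.3f}")
```

At I = 0 the p-bit behaves like a fair coin. Large positive or negative inputs pin it near +1 or -1. That tunable randomness is what probabilistic algorithms draw on.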

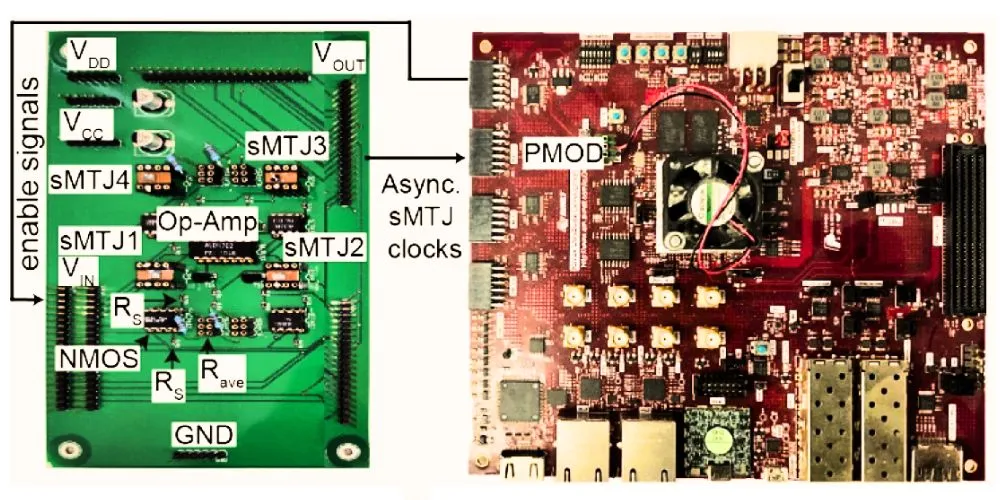

The researchers showed that robust, fully asynchronous (clockless) probabilistic computers can be built at scale. They paired a stochastic magnetic tunnel junction (sMTJ), a probabilistic spintronic device, with powerful field-programmable gate arrays (FPGAs). Previous probabilistic computers of this kind could implement only recurrent neural networks. This work reaches a key milestone by implementing feedforward neural networks, which are foundational to modern AI applications.

The work reports two main advances. First, the team built on earlier work on stochastic magnetic tunnel junctions. Using in-plane sMTJs, they demonstrated the fastest p-bits at the circuit level, fluctuating roughly once every microsecond. That is about three orders of magnitude faster than previous reports. Second, they enforced an update order in hardware and exploited layer-by-layer parallelism. With this, they demonstrated the basic operation of a Bayesian network, an example of a feedforward stochastic neural network.
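The role of update order can be illustrated in software. In a Bayesian network, each node depends only on its parents. Sampling in topological order (parents before children) therefore draws from the joint distribution. All nodes in the same layer can be updated simultaneously, because none of them depends on another.

The minimal sketch below makes several assumptions:

- It uses the p-bit model m = sgn(tanh(I) - r), mapped to {0, 1}.
- It sets each node's input from a conditional probability table.
- It emulates the layer-by-layer updates sequentially.

The three-node network and its probabilities are hypothetical and exist only for illustration. This is not the authors' FPGA/sMTJ design.

```python
import itertools
import math
import random

# Hypothetical toy network (not from the paper): layer 0 = {A, B}, layer 1 = {C}.
# Each node maps to (parents, CPT), where CPT[parent_values] = P(node = 1).
NETWORK = {
    "A": ((), {(): 0.3}),
    "B": ((), {(): 0.6}),
    "C": (("A", "B"), {(0, 0): 0.1, (0, 1): 0.5, (1, 0): 0.7, (1, 1): 0.9}),
}
LAYERS = [["A", "B"], ["C"]]


def p_bit(bias: float, rng: random.Random) -> int:
    """Emulated p-bit: returns 1 with probability (1 + tanh(bias)) / 2, else 0."""
    return 1 if math.tanh(bias) > rng.uniform(-1.0, 1.0) else 0


def bias_from_probability(p: float) -> float:
    """Invert P(1) = (1 + tanh(I)) / 2 to get the p-bit input I (needs 0 < p < 1)."""
    return math.atanh(2.0 * p - 1.0)


def sample_once(rng: random.Random) -> dict:
    """Draw one joint sample by updating the network layer by layer."""
    state = {}
    for layer in LAYERS:
        # Nodes in a layer read only earlier layers, so they all use the same
        # frozen snapshot; in hardware these updates could run in parallel.
        snapshot = dict(state)
        for node in layer:
            parents, cpt = NETWORK[node]
            p = cpt[tuple(snapshot[q] for q in parents)]
            state[node] = p_bit(bias_from_probability(p), rng)
    return state


def estimate_marginal(node: str, n_samples: int = 100_000, seed: int = 0) -> float:
    """Monte Carlo estimate of P(node = 1) from p-bit samples."""
    rng = random.Random(seed)
    return sum(sample_once(rng)[node] for _ in range(n_samples)) / n_samples


def exact_marginal(node: str) -> float:
    """Brute-force P(node = 1) by enumerating every joint assignment."""
    names = list(NETWORK)
    total = 0.0
    for values in itertools.product((0, 1), repeat=len(names)):
        assignment = dict(zip(names, values))
        joint = 1.0
        for name in names:
            parents, cpt = NETWORK[name]
            p1 = cpt[tuple(assignment[q] for q in parents)]
            joint *= p1 if assignment[name] == 1 else 1.0 - p1
        if assignment[node] == 1:
            total += joint
    return total


if __name__ == "__main__":
    for node in NETWORK:
        print(node, round(estimate_marginal(node), 3), round(exact_marginal(node), 3))
```

Comparing the sampled marginals with exact enumeration is a quick sanity check. For this toy network, P(C = 1) works out to 0.484. Suppose C were updated before or alongside its parents. It would then be paired with stale parent values, and the joint samples would no longer respect the network's dependencies. That is why an enforced update order matters for feedforward networks.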

Although the current demonstrations are small, the researchers expect the designs to scale using CMOS-compatible magnetoresistive RAM (MRAM) technology. Scaling up could enable substantial advances in machine learning applications and efficient hardware for deep and convolutional neural networks. Professor Shunsuke Fukami, the principal investigator at Tohoku University, emphasized that the designs can be scaled up to deliver greater computational capability for AI applications.

The result marks an important step toward energy-efficient probabilistic computing for AI.